Key Takeaways

- Agents behave like users, only faster and less accountable. AI agents are non-deterministic, set their own next steps, and can decide to bypass your controls, so static, admin-time entitlements alone are not enough to keep them in bounds.

- Runtime identity ties every agent action to a real user and real context. Agents must be registered as first-class identities, bound to the humans they represent, and evaluated against fine-grained, delegated access rules at the moment of action, not just when credentials were issued.

- Delegated relationships are the core of agent safety. By definition, agents act “on behalf of” someone; modeling that relationship (including human-in-the-loop approvals for high-risk actions) is essential to limit what they can do for one user versus another.

- Any agent type, any channel, same runtime control. Customer service bots, employee copilots, and customer personal agents (e.g., OpenClaw / ChatGPT Operator) all need the same treatment: they are treated as users and tied to the humans they act for.

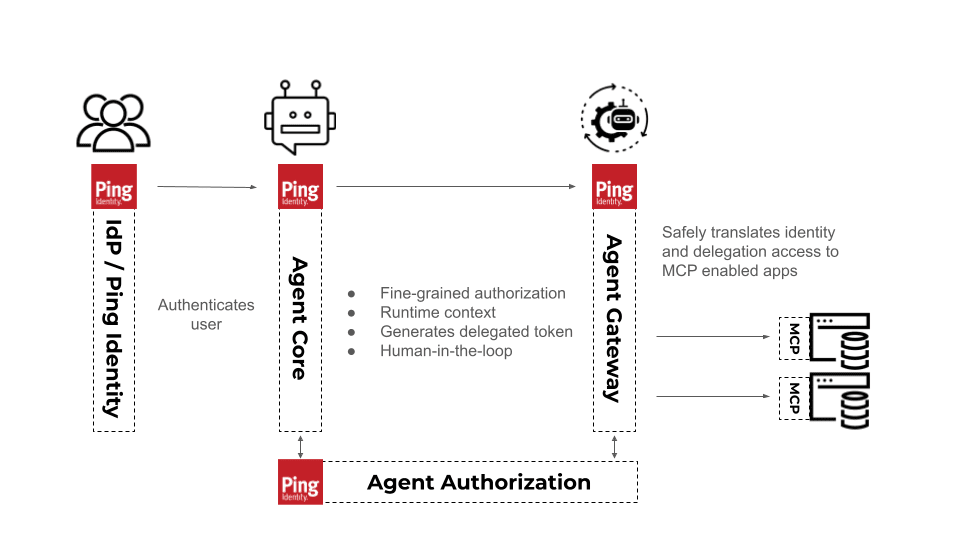

- Ping’s Identity for AI solution operationalizes runtime identity. Ping offers Agent Core to register agents, define delegation rules, and enforce runtime decisions. Ping’s Agent Gateway makes it simple to translate and enforce those identity controls in your MCP-enabled apps.

Agents offer enormous opportunity for your business, from opening new agentic revenue channels that may outgrow traditional ones and giving customers instant, satisfying experiences to multiplying the productivity of your employees. But agents come with risks.

Agents, like humans, are non-deterministic. Their decisions can vary, and they set their own goals and next steps. They may decide that the easiest way to accomplish the next step of a task is to circumvent your security controls. What if a customer-facing chatbot decides that the easiest way to help a customer change their address is to delete the user and create a new one with the right address? Scenarios like this are entirely possible once AI agents have access to your systems.

If you rely on access controls built on standing credentials, and give agents the same privileges as the humans they act on behalf of, their unpredictable behavior leaves gaps and creates serious security risks. Several key pieces help define who an agent is and who it is acting on behalf of, and together they give you the runtime control you need to use agents safely.

Let’s dive into an identity-centric approach to securely enabling agents in your business, one you can start using today!

What is Runtime Identity?

Runtime Identity is the practice of governing users (AI agents in this case) at execution time: agents are registered as identities, tied to the humans they act for, and evaluated against contextual, fine-grained, delegated access rules with each action they take. It applies to any type of user or agent, whether internal or external.
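To make the idea concrete, here is a minimal sketch of a decision made at the moment of action. It is purely illustrative and is not Ping’s API: the `Agent` and `Delegation` classes, the `DELEGATIONS` registry, the `authorize` function, and the action names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Agent:
    agent_id: str       # the agent's own registered identity
    on_behalf_of: str   # the human this agent is bound to


@dataclass
class Delegation:
    allowed_actions: set[str]
    expires_at: datetime
    revoked: bool = False


# Hypothetical in-memory registry: (agent_id, human_id) -> delegation.
DELEGATIONS: dict[tuple[str, str], Delegation] = {}


def authorize(agent: Agent, action: str, resource_owner: str, now: datetime) -> bool:
    """Evaluate the request when the action happens, not when credentials were issued."""
    grant = DELEGATIONS.get((agent.agent_id, agent.on_behalf_of))
    if grant is None or grant.revoked or now >= grant.expires_at:
        return False  # no live delegation at this moment
    if resource_owner != agent.on_behalf_of:
        return False  # the agent may only touch its own human's resources
    return action in grant.allowed_actions


# Usage: a support bot acting for "alice" may update her address but not delete her.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
bot = Agent("support-bot", on_behalf_of="alice")
DELEGATIONS[("support-bot", "alice")] = Delegation(
    {"profile.update_address"}, expires_at=now + timedelta(hours=1)
)

can_update = authorize(bot, "profile.update_address", "alice", now)  # allowed
can_delete = authorize(bot, "user.delete", "alice", now)             # denied
```

Because the check runs on every call, revoking or expiring the delegation takes effect on the agent’s very next action.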

Agents are Identities

AI agents are as non-deterministic and unpredictable as human users, but they face none of the consequences if they act maliciously. As with human users, it’s critical to give your agents identities. Agents MUST be identified and registered as first-class citizens.

Registering users (agents or humans) is a key component of identity. It ensures that your users have the appropriate privileges. It ensures a marketing user can’t see the salaries of everyone at the company. It ensures one user can’t see another user’s information. This concept has been baked into identity solutions for decades. But agents require more.

Defining Agentic Relationships

Webster defines an agent as “a person or thing that acts on behalf of another.” That “on behalf of” is key. Agents are delegates, executing someone’s intent and authority. An agent (like your real estate agent, your travel agent, or your human assistant) is acting on your behalf. They may be able to make purchases for you, but not for your neighbor. And like human agents, AI agents may need approval from the humans they represent before taking high-risk actions. That’s human in the loop, and it’s critical for AI agents.
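The “for you, but not for your neighbor” rule and the human-in-the-loop step can be sketched in a few lines. This is an illustration under assumptions, not a reference implementation: the `DELEGATORS` table, the `HIGH_RISK` set, the `decide` function, and the `approve` callback are hypothetical names, and a real approval would reach the human through a prompt or notification instead of a local function.

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"


# Hypothetical data: which humans each agent may act for,
# and which actions need explicit human approval.
DELEGATORS: dict[str, set[str]] = {"travel-agent": {"you"}}
HIGH_RISK: set[str] = {"purchase"}


def decide(
    agent_id: str,
    principal: str,
    action: str,
    approve: Callable[[str, str, str], bool],
) -> Decision:
    """Allow an action only within the delegation, asking the human for high-risk steps."""
    if principal not in DELEGATORS.get(agent_id, set()):
        return Decision.DENY  # the agent does not represent this person
    if action in HIGH_RISK and not approve(principal, agent_id, action):
        return Decision.DENY  # the human in the loop said no
    return Decision.ALLOW


# Usage: stand-in approval callbacks for the sketch.
def always_yes(principal: str, agent_id: str, action: str) -> bool:
    return True


def always_no(principal: str, agent_id: str, action: str) -> bool:
    return False


for_you = decide("travel-agent", "you", "purchase", always_yes)            # Decision.ALLOW
for_neighbor = decide("travel-agent", "neighbor", "purchase", always_yes)  # Decision.DENY
declined = decide("travel-agent", "you", "purchase", always_no)            # Decision.DENY
```

Checking the relationship before asking for approval means a human is never prompted on behalf of an agent that does not represent them.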

Identity and access management (IAM) is a specialized area of cybersecurity that has, for decades, secured businesses by understanding who their users are. More recently, the IAM industry has defined systems for how those identities relate to each other and how they can and can’t act on each other’s behalf. Those same concepts need to apply to your AI agents.

Any User Type and Any Agent Type

Like users, agents come in many varieties. A few broad categories are:

- Customer digital assistants: These are an early, very common type of service chatbot agent that many organizations are leveraging. To be truly helpful and help businesses scale personalized support and services, they need to do more than chat. They need access to customer profiles, case history, and sensitive data without being over-privileged. They even need to be able to act on a customer's behalf, doing things like adding items to their shopping cart.

- Employee digital assistants: Businesses will fall behind if they don’t invest in and augment their workforce with AI agents. AI copilots or agents that query systems, knowledge bases, and tools on behalf of employees and help them become more efficient are becoming a critical, but often insecure, new way of life for today’s businesses.

- Customer personal agents: Whether you like it or not, your customers are using tools like OpenClaw and ChatGPT Operator. Eventually, customers will shy away from businesses who don’t allow them to use their agentic personal assistants.

Businesses must cater to all of these agent types, just like they cater to their employees, partners, customers, and various types of human users. And like their human counterparts, these different types of agents have different needs and security controls that should be implemented through a single identity-aware architecture.
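One way to picture a single identity-aware architecture is one evaluation path that consults a different policy per agent type. The sketch below is an assumption-laden illustration: the `AgentType` enum, the `POLICIES` table, the `is_permitted` function, and every action name are hypothetical and chosen only to mirror the categories above.

```python
from enum import Enum


class AgentType(Enum):
    CUSTOMER_ASSISTANT = "customer_assistant"
    EMPLOYEE_ASSISTANT = "employee_assistant"
    CUSTOMER_PERSONAL_AGENT = "customer_personal_agent"


# Hypothetical per-type policies, all checked by the same function.
POLICIES: dict[AgentType, frozenset[str]] = {
    AgentType.CUSTOMER_ASSISTANT: frozenset({"profile.read", "case.read", "cart.add_item"}),
    AgentType.EMPLOYEE_ASSISTANT: frozenset({"kb.search", "tool.query"}),
    AgentType.CUSTOMER_PERSONAL_AGENT: frozenset({"cart.add_item", "order.read"}),
}


def is_permitted(agent_type: AgentType, action: str) -> bool:
    """One decision point for every agent type; unknown actions are denied by default."""
    return action in POLICIES.get(agent_type, frozenset())


# Usage: the same call serves all three categories.
assistant_can_add = is_permitted(AgentType.CUSTOMER_ASSISTANT, "cart.add_item")  # permitted
copilot_can_add = is_permitted(AgentType.EMPLOYEE_ASSISTANT, "cart.add_item")    # not permitted
```

In practice this type-level check would sit alongside the per-user delegation check, so an agent needs both the right type of access and a live relationship with the human it represents.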